IDENTIFY DISTORTION

THREATENING TRUTH

Identifying distortion that threatens truth is a core skill for clear thinking—especially in an era of abundant information, incentives to mislead, and human cognitive quirks. Distortion isn’t always a lie; it can be omission, exaggeration, framing, or subtle bias that quietly warps reality. The goal is to spot what undermines accurate understanding of what’s real, not just what feels wrong.

Here’s a practical, step-by-step framework you can apply to any claim, narrative, article, speech, data set, or argument:

1. Check the foundation: Evidence and falsifiability

Is The Claim Valid?

Can It Be Disproven?

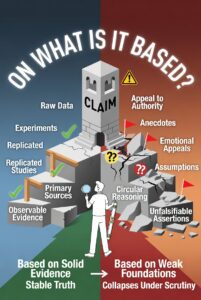

On What Is It Based?

Does the claim rest on testable, observable evidence (data, experiments, direct records) or on appeals to authority, emotion, or “everyone knows this”?

The seeker represents epistemic vigilance—actively testing rather than passively accepting. The scale emphasizes weighing evidence over narrative. The distortions on the right highlight the common threats (motive, emotion, selective facts) that can make a claim feel true while undermining its validity.

You can use this as a mental model or even as a reminder when evaluating news, arguments, or social media claims.

If no conceivable evidence could refute it, the claim is unfalsifiable and may be functioning as dogma (a classic distortion tactic).

This is the principle of falsifiability, one of the most powerful tools for identifying distortion and protecting truth.

The scene shows a scientist/truth-seeker standing in front of two large doors. One door (bright, open pathway) is labeled “Falsifiable – Can Be Disproven” and leads to clear evidence, experiments, and reality checks. The other door (dark, locked) is labeled “Unfalsifiable – Cannot Be Disproven” and is surrounded by warning symbols of dogma, circular reasoning, and shifting goalposts. A large glowing question “CAN IT BE DISPROVEN?” dominates the top, with a broken chain on the falsifiable side and an unbreakable loop on the unfalsifiable side.

Falsifiability matters: claims that cannot be tested or potentially disproven often hide distortion, whether intentional or not. It’s a strong visual reminder that real truth-seeking requires the possibility of being wrong.

Red flag: Heavy reliance on anecdotes, secret knowledge, or “trust the experts” without showing the raw data/methods.

Focus on the foundation of a claim and expose whether it’s built on solid evidence or shaky ground.

The scene shows a large structure (like a tower or claim) being examined by a truth-seeker with a magnifying glass and level tool. One side has a strong, stable foundation made of solid blocks labeled with evidence types (raw data, experiments, replicated studies, primary sources). The other side has a weak, crumbling foundation with warning symbols for common distortions (authority appeals, anecdotes, emotions, assumptions, circular reasoning). A prominent question “ON WHAT IS IT BASED?” arches over the top, with pathways showing outcomes: stable truth vs. collapse/distortion.

2. Examine internal and external consistency

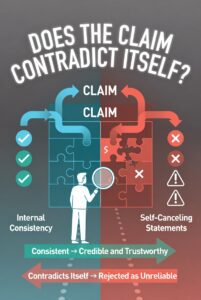

Does the claim contradict itself or its own premises?

Spotting internal inconsistencies is a key tool for identifying distortion and protecting truth.

The scene shows a large “CLAIM” banner or structure being examined by a truth-seeker. One side of the claim appears coherent and logically connected (smooth, harmonious elements with green checkmarks), while the other side visibly breaks apart or clashes with itself (clashing arrows pointing in opposite directions, broken logic chains, red “X” marks, and puzzle pieces that don’t fit). A prominent glowing question “DOES THE CLAIM CONTRADICT ITSELF?” arches over the top, with pathways showing outcomes: logical consistency leads to credibility, while self-contradiction leads to rejection.

This serves as a strong visual reminder that even eloquent or emotionally appealing claims can fall apart under scrutiny if they contain internal contradictions. Self-contradiction is often one of the quickest ways to detect distortion.

Does it clash with well-established, replicated facts from unrelated sources? (Cross-check against primary sources, not summaries.)

It is important to check every claim against established, observable reality.

The scene shows a large “CLAIM” shield or structure being examined by a truth-seeker. One side seamlessly aligns with solid, well-documented facts (green checkmarks, interlocking puzzle pieces with data charts, experiments, and historical records). The other side violently clashes and breaks apart when forced against the same facts (red “X” marks, sparks flying, cracked surfaces, and warning symbols). A prominent glowing question “DOES IT CLASH WITH FACTS?” arches over the top, with clear pathways: alignment with facts leads to validity, while clashes lead to rejection.

No matter how compelling a claim sounds, if it directly contradicts well-established facts, it cannot hold up under scrutiny. It’s a visual reminder to always cross-check against reality rather than accepting narratives in isolation.

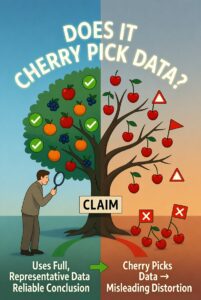

Red flag: Selective use of facts, that is, cherry-picking data that fits while ignoring contradictory evidence (closely related to the “Texas sharpshooter” fallacy).

This is one of the most common and sneaky forms of distortion.

The image shows a truth-seeker examining a large tree labeled “CLAIM”. On the left side, the tree has a healthy, balanced set of branches with full, diverse fruit representing comprehensive data. On the right side, the branches are stripped bare except for a few carefully selected ripe cherries (cherry-picked data), while the rest of the fruit lies discarded on the ground. A prominent glowing question “DOES IT CHERRY PICK DATA?” arches over the top, with pathways indicating outcomes: full data leads to truth, while cherry-picking leads to misleading conclusions.

A claim may look appealing if you only look at the hand-picked “cherries,” but it falls apart when you see the full picture of the data that’s been conveniently ignored.

3. Identify motive and incentives

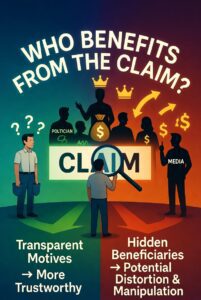

Who benefits if the claim is believed? (Money, power, status, clicks, ideological victory.)

Always follow the incentives when evaluating any claim.

The scene shows a large glowing “CLAIM” banner being examined by a truth-seeker with a magnifying glass. Behind the claim stands a group of shadowy beneficiary figures (politician, corporation executive, activist, media personality) collecting money bags, power symbols, and influence icons flowing from the claim. On the opposite side, the average person (representing the public) stands with empty pockets and question marks, highlighting the hidden motives. A prominent glowing question “WHO BENEFITS FROM THE CLAIM?” arches over the top, with clear pathways: transparent motives support truth-seeking, while hidden beneficiaries signal potential distortion.

This is one of the most effective ways to detect when a claim might be shaped more by incentives than by truth.

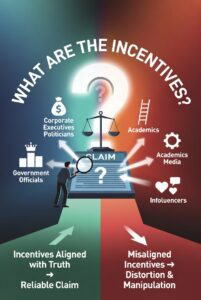

Follow the incentives: Media, governments, corporations, activists, and even academics have skin in the game.

Incentives shape claims and often drive distortion.

The scene features a large central “CLAIM” document or platform being scrutinized by a truth-seeker with a magnifying glass. Surrounding the claim are glowing arrows and icons clearly mapping out the incentives: money bags flowing to corporations and politicians, power symbols (crowns, podiums) for activists and governments, career advancement ladders for academics and media figures, and social approval (likes/hearts) for influencers. On the opposite side, the truth-seeker stands with a scale weighing genuine truth against these incentive-driven influences. A prominent glowing question “WHAT ARE THE INCENTIVES?” arches over the top, with pathways showing outcomes: aligned incentives with truth vs. misaligned incentives leading to distortion.

Understanding “who gains what” is essential for separating truth from motivated reasoning.

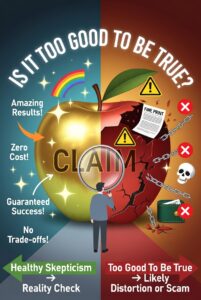

Red flag: The distortion aligns too perfectly with the source’s tribal interests or funding. Truth usually has rough edges.

A related red-flag question is “Is it too good to be true?” It helps expose overly optimistic claims, hidden trade-offs, and potential distortions.

The scene shows a large, shiny “CLAIM” presented as a golden apple or tempting offer, glowing with promise. A truth-seeker stands in front, examining it closely with a magnifying glass. One side reveals a beautiful, perfect exterior (rainbows, sparkles, “Free!”, “Guaranteed Results!”, “No Downsides!”). The other side cracks open to show hidden costs, fine print, broken promises, and warning symbols pouring out. A prominent glowing question “IS IT TOO GOOD TO BE TRUE?” arches over the top, with pathways leading to “Healthy Skepticism → Reality Check” versus “Naive Acceptance → Disappointment.”

Extraordinary claims that sound perfect often hide serious costs or impossibilities. It’s a strong visual cue to pause and dig deeper whenever something seems unrealistically beneficial.

4. Spot rhetorical and linguistic tricks

Loaded language, euphemisms, or vague terms that smuggle in assumptions (“mostly peaceful,” “safe and effective,” “existential threat”).

Emotionally charged or biased words can distort truth.

The scene shows a large “CLAIM” speech bubble or document being examined by a truth-seeker with a magnifying glass. One side uses neutral, factual language (clean blue-green tones with straightforward text like “Observed Data Shows…”). The other side is overloaded with loaded, manipulative language (red-orange tones exploding with fiery words like “Disastrous!”, “Heroic!”, “Evil Regime!”, “Miraculous!”, “Crisis!”, and emotional symbols). A prominent glowing question “DOES IT CONTAIN LOADED LANGUAGE?” arches over the top, with pathways showing outcomes: neutral language supports clear thinking, while loaded language signals emotional manipulation and potential distortion.

Loaded language sneaks in bias and emotion, making a claim feel true without providing real evidence. It’s an excellent reminder to strip away the charged words and examine the actual substance.

Emotional manipulation: Fear, outrage, moral superiority, or ridicule instead of evidence.

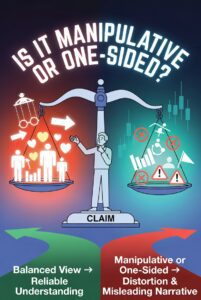

Framing: Presenting only one side of a trade-off as if it’s cost-free.

Claims can distort truth by presenting only one perspective while ignoring counter-evidence or trade-offs.

The scene shows a large “CLAIM” as a stage or scale being examined by a truth-seeker. One side is heavily tilted and overloaded with glowing positive elements, emotional appeals, and hero/villain framing (one-sided manipulation). The other side is balanced, showing multiple perspectives with both pros/cons and counter-evidence. A prominent glowing question “IS IT MANIPULATIVE OR ONE-SIDED?” arches over the top, with pathways showing outcomes: balanced view leads to reliable understanding, while manipulative/one-sided presentation leads to distortion.

This image serves as a powerful reminder to always check whether a claim fairly represents reality or is pushing a single narrative through selective emphasis, emotional framing, or omission of opposing facts.

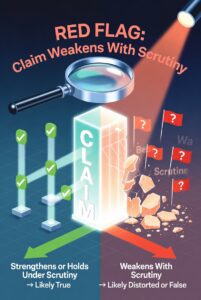

Red flag: The claim gets stronger with repetition or social pressure, but weaker under scrutiny.

Genuine truth usually holds up or even strengthens when examined closely, while distorted or false claims tend to crumble, fade, or reveal cracks the moment serious scrutiny is applied.

5. Test against your own biases

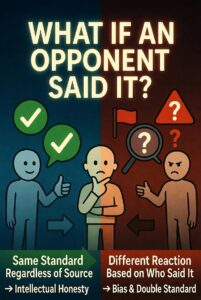

Ask: “Would I accept this exact reasoning if it came from the opposite side?”

This is a powerful mental tool (sometimes called a “reversibility test,” and a close cousin of the “ideological Turing test,” which asks whether you can state the other side’s view convincingly) for detecting bias, double standards, and distortion in claims.

This illustrates one of the best tests for intellectual honesty: Would you accept this exact claim and reasoning if it came from the opposing side? If your reaction changes dramatically based on the source rather than the substance, that’s a strong sign of bias or distortion at work.

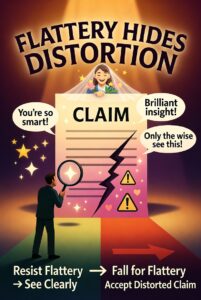

Distortion often feels invisible when it flatters your priors (confirmation bias).

This is a strong visual warning about how praise, ego-stroking, and feel-good language are often used to conceal weak or manipulative claims.

Flattery works as a subtle tool of distortion: it lowers our defenses and makes us more likely to accept claims we would otherwise scrutinize. The key lesson is to stay vigilant even (especially) when a claim makes us feel smart, special, or morally superior.

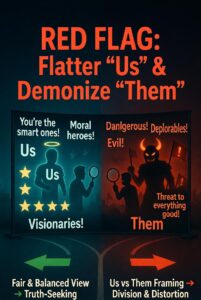

Red flag: The source flatters “us” and demonizes “them” without nuance.

This is one of the most common and effective tactics used to spread distortion and manipulate groups. It is the classic tribal manipulation tactic: making “our side” feel superior and virtuous while painting the other side as dangerous or immoral. It lowers critical thinking and makes people more willing to accept distorted claims that flatter their group identity.

6. Seek the strongest counter-arguments and primary sources

Actively hunt for the best steelman of the opposing view (not the weakest strawman).

This practice, known as steelmanning, means deliberately finding and engaging with the strongest version of the opposing view rather than the weakest. It encourages intellectual honesty and leads to clearer thinking and greater alignment with truth.

Demand raw data, original studies, full transcripts—not summaries or “fact-checks” that often distort via omission.

Strip away narrative, emotion, opinion, and distortion to focus solely on verifiable evidence. Cut through the noise and demand just the facts, nothing more, nothing less.

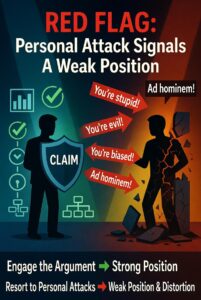

Red flag: The source attacks critics personally instead of engaging the substance (ad hominem).

This reflects a classic truth-seeking principle: when someone shifts from attacking the argument to attacking the person, it is often because their position cannot withstand scrutiny.

Quick diagnostic checklist (run through it mentally)

- Is the claim extraordinary? If so, is it backed by extraordinary evidence?

- Is complexity being flattened into a simple hero/villain story?

- Does the claim rely on future predictions dressed as certainty (“by 2030…”)?

- Has the source been wrong before in the same direction (track record matters)?

- Would an honest, competent person trying to maximize truth say it in exactly this way?

Truth-seeking isn’t about being “open-minded” to everything—it’s about ruthless calibration to reality. Distortions thrive in low-accountability environments (social media, partisan media, bureaucracies). The antidote is epistemic humility + relentless verification + willingness to update when better evidence appears.

This framework scales from everyday conversations to major public debates. Practice it daily and it becomes second nature. The biggest threat to truth isn’t the loud lie—it’s the quiet, comfortable distortion that feels true because it fits the narrative. Spot that, and you’re already ahead.