IDENTIFY DISTORTION

THREATENING TRUTH

Identifying distortion that threatens truth is a core skill for clear thinking—especially in an era of abundant information, incentives to mislead, and human cognitive quirks. Distortion isn’t always a lie; it can be omission, exaggeration, framing, or subtle bias that quietly warps reality. The goal is to spot what undermines accurate understanding of what’s real, not just what feels wrong.

Here’s a practical, step-by-step framework you can apply to any claim, narrative, article, speech, data set, or argument:

1. Check the foundation: Evidence and falsifiability

Is The Claim Valid?

Can It Be Disproven?

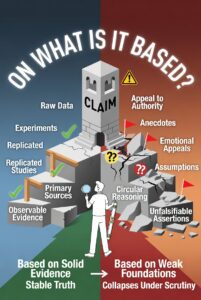

On What Is It Based?

Does the claim rest on testable, observable evidence (data, experiments, direct records) or on appeals to authority, emotion, or “everyone knows this”?

If no conceivable evidence could refute it, the claim is unfalsifiable and cannot be tested. Presenting such a claim as established fact is a classic distortion tactic.

Red flag: Heavy reliance on anecdotes, secret knowledge, or “trust the experts” without showing the raw data/methods.

2. Examine internal and external consistency

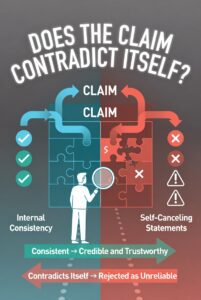

Does the claim contradict itself or its own premises?

Does it clash with well-established, replicated facts from unrelated sources? (Cross-check against primary sources, not summaries.)

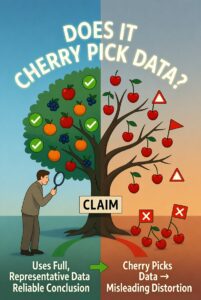

Red flag: Selective use of facts, i.e. cherry-picking data that fits while ignoring contradictory evidence. This is closely related to the “Texas sharpshooter” fallacy, in which the target is drawn around the hits after the fact.
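The cherry-picking red flag can be made concrete with a small numerical sketch. The numbers are invented purely for illustration, and `cherry_pick` is a hypothetical helper, not a standard function. It shows how reporting only the favorable results turns “no effect on average” into an apparent positive effect.

```python
from statistics import mean

# Hypothetical outcomes from eight trials (made-up numbers, illustration only).
all_results = [-2.0, -1.0, 0.0, 0.5, 1.0, -0.5, 3.0, -1.0]


def cherry_pick(values, threshold):
    """Keep only the results at or above the threshold (the 'convenient' ones)."""
    return [v for v in values if v >= threshold]


# Honest summary: every trial counts.
full_mean = mean(all_results)

# Distorted summary: only trials that support the desired story are reported.
picked = cherry_pick(all_results, 0.5)
picked_mean = mean(picked)

print(f"All {len(all_results)} trials: mean effect = {full_mean}")
print(f"Reported {len(picked)} trials: mean effect = {picked_mean}")
```

Every reported number here is real; the distortion lies entirely in what was left out. That is why asking for the full data set, not just the highlighted subset, is part of checking consistency.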

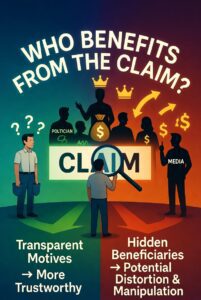

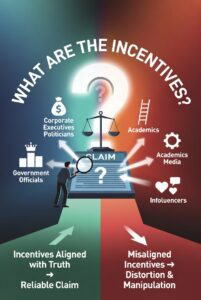

3. Identify motive and incentives

Who benefits if the claim is believed? (Money, power, status, clicks, ideological victory.)

Follow the incentives: Media, governments, corporations, activists, and even academics have skin in the game.

Red flag: The distortion aligns too perfectly with the source’s tribal interests or funding. Truth usually has rough edges.

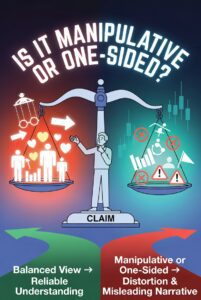

4. Spot rhetorical and linguistic tricks

Loaded language, euphemisms, or vague terms that smuggle in assumptions (“mostly peaceful,” “safe and effective,” “existential threat”).

Emotional manipulation: Fear, outrage, moral superiority, or ridicule instead of evidence.

Framing: Presenting only one side of a trade-off as if it’s cost-free.

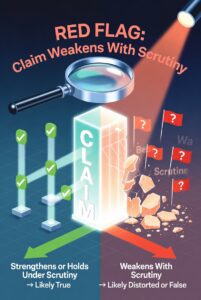

Red flag: The claim gets stronger with repetition or social pressure, but weaker under scrutiny.

5. Test against your own biases

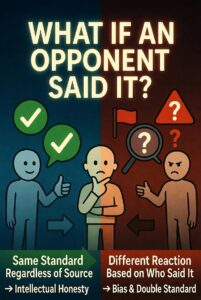

Ask: “Would I accept this exact reasoning if it came from the opposite side?”

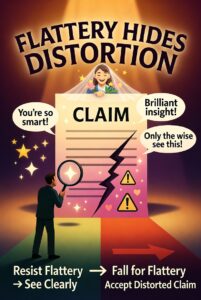

Distortion often feels invisible when it flatters your priors (confirmation bias).

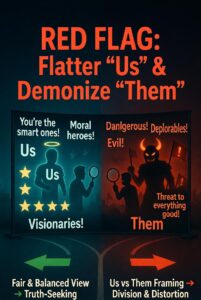

Red flag: The source flatters “us” and demonizes “them” without nuance.

6. Seek the strongest counter-arguments and primary sources

Actively hunt for the best steelman of the opposing view (not the weakest strawman).

Demand raw data, original studies, full transcripts—not summaries or “fact-checks” that often distort via omission.

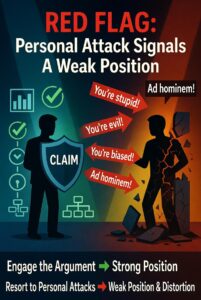

Red flag: The source attacks critics personally instead of engaging the substance (ad hominem).

Quick diagnostic checklist (run through it mentally)

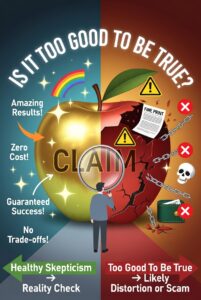

- Is the claim extraordinary? If so, is it backed by correspondingly extraordinary evidence?

- Is complexity being flattened into a simple hero/villain story?

- Does the claim rely on future predictions dressed as certainty (“by 2030…”)?

- Has the source been wrong before in the same direction (track record matters)?

- Would an honest, competent person trying to maximize truth say this in exactly this way?
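For readers who prefer to keep notes, the checklist can be recorded in a few lines of code. This is only a minimal sketch: the item wording, the `flags_raised` helper, and the example answers are all assumptions made for illustration. It deliberately lists the flags raised rather than computing a score, since the checklist is an aid to judgment and not a measurement.

```python
# Checklist items paraphrased as red flags (wording is an assumption).
CHECKLIST = (
    "Extraordinary claim without extraordinary evidence",
    "Complexity flattened into a hero/villain story",
    "Future prediction dressed as certainty",
    "Source has been wrong before in the same direction",
    "An honest, competent truth-seeker would not phrase it this way",
)


def flags_raised(answers: dict[str, bool]) -> list[str]:
    """Return the checklist items marked True, in checklist order."""
    unknown = set(answers) - set(CHECKLIST)
    if unknown:
        raise ValueError(f"Not a checklist item: {sorted(unknown)}")
    # Items that were not answered are treated as not flagged.
    return [item for item in CHECKLIST if answers.get(item, False)]


# Hypothetical assessment of a single headline.
answers = {
    "Complexity flattened into a hero/villain story": True,
    "Future prediction dressed as certainty": True,
    "Source has been wrong before in the same direction": False,
}

for flag in flags_raised(answers):
    print("Red flag:", flag)
```

A raised flag is a prompt to look closer, not proof of distortion.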

Truth-seeking isn’t about being “open-minded” to everything—it’s about ruthless calibration to reality. Distortions thrive in low-accountability environments (social media, partisan media, bureaucracies). The antidote is epistemic humility + relentless verification + willingness to update when better evidence appears.

This framework scales from everyday conversations to major public debates. Practice it daily and it becomes second nature. The biggest threat to truth isn’t the loud lie—it’s the quiet, comfortable distortion that feels true because it fits the narrative. Spot that, and you’re already ahead.